Preface

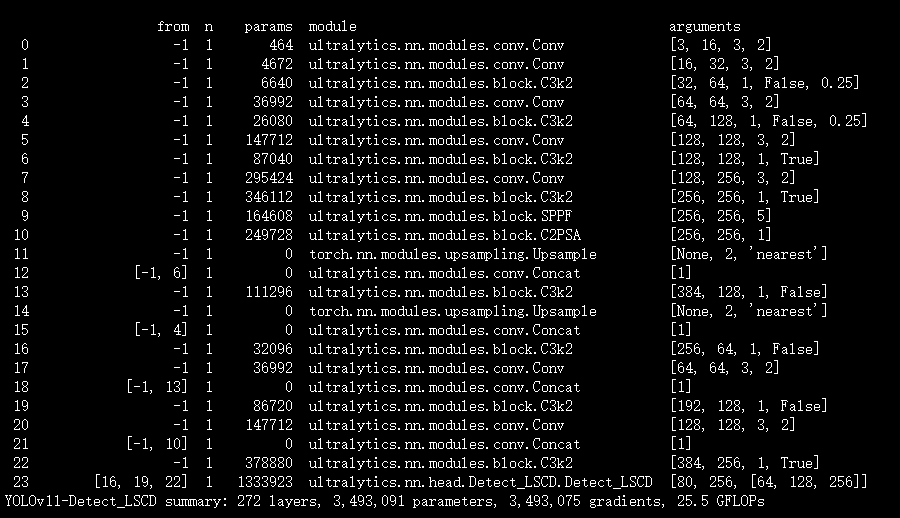

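This article introduces LSCD (Lightweight Shared Convolutional Detection), a lightweight detection head built on group normalization (GroupNorm) and shared convolutions, and integrates it into YOLOv11. By combining GroupNorm with shared convolutions, the LSCD head reduces computation and complexity while still fusing feature information effectively. We add the LSCD code to the YOLOv11 detection head and register it in the tasks file. In our experiments, YOLOv11-LSCD achieved good results on object detection.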

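Article index: YOLOv11 Improvements Collection: Convolution Layers, Lightweight Design, Attention Mechanisms, Loss Functions, Backbone, SPPF, Neck, and Detection Head Optimizations

Column link: YOLOv11 Improvements Column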


- Preface

- How it works

- Core code

- Experiments

- Script

- Results

How it works

References:

https://pdf.hanspub.org/csa2024149_51543326.pdf

https://www.mdpi.com/2079-9292/13/16/3216

http://www.tcsae.org/cn/article/pdf/preview/10.11975/j.issn.1002-6819.202408065.pdf

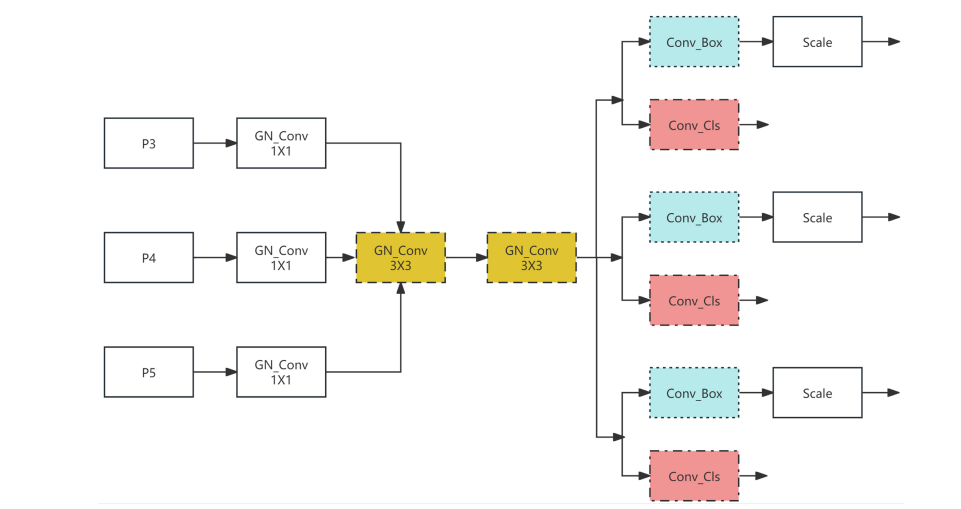

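LSCD (Lightweight Shared Convolutional Detection) is a lightweight detection head based on group normalization (GroupNorm) and shared convolutions; its network structure is shown in Figure 5. By exploiting both GroupNorm and shared convolutions, the LSCD head keeps feature information effectively fused while minimizing computation and complexity.

Figure 5. LSCD detection head structure.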
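To make the saving concrete, the sketch below counts the weights of the 3 × 3 convolution stack when it is shared across three detection levels versus duplicated on each level. The width of 256 and the stack of two 3 × 3 layers follow the `hidc=256` default and `share_conv` in the code later in this article. The bias-free convolution and the per-channel GroupNorm scale and shift are assumptions about `Conv_GN`, and the helper names are made up for illustration.

```python
def conv_gn_params(c_in: int, c_out: int, k: int) -> int:
    """Weights of one conv (assumed bias-free) plus GroupNorm scale and shift."""
    return c_in * c_out * k * k + 2 * c_out


def stack_params(hidc: int = 256, depth: int = 2, k: int = 3) -> int:
    """Weights of `depth` stacked k x k Conv+GroupNorm layers at width `hidc`."""
    return depth * conv_gn_params(hidc, hidc, k)


levels = 3  # three detection levels, as in the figure
shared = stack_params()             # one stack reused on every level
unshared = levels * stack_params()  # a separate stack for each level

print(f"shared:   {shared:,}")
print(f"unshared: {unshared:,}")
print(f"ratio:    {unshared / shared:.1f}x")
```

Under these assumptions the shared stack holds 1,180,672 weights against 3,542,016 for three separate stacks. This counts parameters only; the shared stack is still evaluated once per level.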

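In the LSCD head structure shown in the figure, a GN_Conv 1 × 1 module denotes group normalization (GroupNorm) followed by a convolution (Conv), where 1 × 1 is the kernel size. The two yellow GN_Conv 3 × 3 modules share their weights, as do the three blue box-prediction modules (Conv_Box) and the three red classification modules (Conv_Cls). Each Conv_Box module is followed by a Scale module, which is essentially a scaling factor that lets the shared box branch adapt to targets at different scales.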
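The weight sharing and per-level scaling described above can be sketched in a few lines of PyTorch. This is a minimal illustration and not the repository's code: `ConvGN`, `Scale`, and `TinySharedHead` are hypothetical names, and the 16 groups, the SiLU activation, the learnable scalar in `Scale`, and the small channel widths are all assumptions made for the example.

```python
import torch
import torch.nn as nn


class ConvGN(nn.Module):
    """Conv2d followed by GroupNorm and SiLU (assumed layout of a GN_Conv block)."""

    def __init__(self, c_in, c_out, k=1, groups=16):
        super().__init__()
        self.conv = nn.Conv2d(c_in, c_out, k, padding=k // 2, bias=False)
        self.gn = nn.GroupNorm(groups, c_out)  # c_out must be divisible by groups
        self.act = nn.SiLU()

    def forward(self, x):
        return self.act(self.gn(self.conv(x)))


class Scale(nn.Module):
    """Learnable scalar multiplier (assumed), one instance per detection level."""

    def __init__(self, init=1.0):
        super().__init__()
        self.scale = nn.Parameter(torch.tensor(init, dtype=torch.float))

    def forward(self, x):
        return x * self.scale


class TinySharedHead(nn.Module):
    """Per-level 1x1 stem, then one 3x3 stack and one box/cls conv reused by all levels."""

    def __init__(self, nc=3, reg_max=16, hidc=64, ch=(32, 64, 128)):
        super().__init__()
        self.stem = nn.ModuleList(ConvGN(c, hidc, 1) for c in ch)  # one per level
        self.shared = nn.Sequential(ConvGN(hidc, hidc, 3), ConvGN(hidc, hidc, 3))
        self.box = nn.Conv2d(hidc, 4 * reg_max, 1)  # shared box branch
        self.cls = nn.Conv2d(hidc, nc, 1)  # shared class branch
        self.scale = nn.ModuleList(Scale(1.0) for _ in ch)  # one per level

    def forward(self, feats):
        outs = []
        for i, f in enumerate(feats):
            h = self.shared(self.stem[i](f))  # same 3x3 weights on every level
            outs.append(torch.cat((self.scale[i](self.box(h)), self.cls(h)), 1))
        return outs


head = TinySharedHead()
feats = [torch.randn(1, c, s, s) for c, s in zip((32, 64, 128), (32, 16, 8))]
outs = head(feats)
print([tuple(o.shape) for o in outs])
```

Every level produces `4 * reg_max + nc` channels (67 here) at its own spatial resolution. Only the 1 × 1 stems and the `Scale` modules are level-specific.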

Core code

Code source: https://github.com/z1069614715/ultralytics-face/blob/77e06c7fbf452ff09efe6ec4fbd9f1fb665d6ac8/ultralytics/nn/modules/head.py#L517
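As orientation for the head in this section: each anchor point carries `nc + 4 * reg_max` raw outputs (`self.no` in the code), and at inference the per-level maps are flattened and concatenated along the anchor axis. The small sketch below works out that shape. The function name is hypothetical, and the strides of 8, 16, and 32 with a square 640 input are assumptions based on the usual three-level YOLO layout.

```python
def head_output_shape(nc=80, reg_max=16, imgsz=640, strides=(8, 16, 32), batch=1):
    """Shape of the concatenated raw predictions for a square input image."""
    no = nc + 4 * reg_max  # outputs per anchor point: class scores + DFL box bins
    anchors = sum((imgsz // s) ** 2 for s in strides)  # grid cells over all levels
    return batch, no, anchors


print(head_output_shape())
```

With the defaults this gives `(1, 144, 8400)`: 80 class channels plus 64 box-distribution channels, over 6400 + 1600 + 400 grid cells.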

    # Excerpt from ultralytics/nn/modules/head.py in the repository linked above.
    # It relies on that file's imports (math, torch, torch.nn as nn, Conv, DFL, Proto,
    # make_anchors, dist2bbox, dist2rbox) and on the repository's Conv_GN and Scale modules.


    class Detect_LSCD(nn.Module):
        # Lightweight Shared Convolutional Detection Head
        """YOLOv8 Detect head for detection models."""

        dynamic = False  # force grid reconstruction
        export = False  # export mode
        shape = None
        anchors = torch.empty(0)  # init
        strides = torch.empty(0)  # init

        def __init__(self, nc=80, hidc=256, ch=()):
            """Initializes the YOLOv8 detection layer with specified number of classes and channels."""
            super().__init__()
            self.nc = nc  # number of classes
            self.nl = len(ch)  # number of detection layers
            self.reg_max = 16  # DFL channels (ch[0] // 16 to scale 4/8/12/16/20 for n/s/m/l/x)
            self.no = nc + self.reg_max * 4  # number of outputs per anchor
            self.stride = torch.zeros(self.nl)  # strides computed during build
            self.conv = nn.ModuleList(nn.Sequential(Conv_GN(x, hidc, 1)) for x in ch)
            self.share_conv = nn.Sequential(Conv_GN(hidc, hidc, 3), Conv_GN(hidc, hidc, 3))
            self.cv2 = nn.Conv2d(hidc, 4 * self.reg_max, 1)
            self.cv3 = nn.Conv2d(hidc, self.nc, 1)
            self.scale = nn.ModuleList(Scale(1.0) for x in ch)
            self.dfl = DFL(self.reg_max) if self.reg_max > 1 else nn.Identity()

        def forward(self, x):
            """Concatenates and returns predicted bounding boxes and class probabilities."""
            for i in range(self.nl):
                x[i] = self.conv[i](x[i])
                x[i] = self.share_conv(x[i])
                x[i] = torch.cat((self.scale[i](self.cv2(x[i])), self.cv3(x[i])), 1)
            if self.training:  # Training path
                return x

            # Inference path
            shape = x[0].shape  # BCHW
            x_cat = torch.cat([xi.view(shape[0], self.no, -1) for xi in x], 2)
            if self.dynamic or self.shape != shape:
                self.anchors, self.strides = (x.transpose(0, 1) for x in make_anchors(x, self.stride, 0.5))
                self.shape = shape

            if self.export and self.format in ("saved_model", "pb", "tflite", "edgetpu", "tfjs"):  # avoid TF FlexSplitV ops
                box = x_cat[:, : self.reg_max * 4]
                cls = x_cat[:, self.reg_max * 4 :]
            else:
                box, cls = x_cat.split((self.reg_max * 4, self.nc), 1)
            dbox = self.decode_bboxes(box)

            if self.export and self.format in ("tflite", "edgetpu"):
                # Precompute normalization factor to increase numerical stability
                # See https://github.com/ultralytics/ultralytics/issues/7371
                img_h = shape[2]
                img_w = shape[3]
                img_size = torch.tensor([img_w, img_h, img_w, img_h], device=box.device).reshape(1, 4, 1)
                norm = self.strides / (self.stride[0] * img_size)
                dbox = dist2bbox(self.dfl(box) * norm, self.anchors.unsqueeze(0) * norm[:, :2], xywh=True, dim=1)

            y = torch.cat((dbox, cls.sigmoid()), 1)
            return y if self.export else (y, x)

        def bias_init(self):
            """Initialize Detect() biases, WARNING: requires stride availability."""
            m = self  # self.model[-1]  # Detect() module
            # cf = torch.bincount(torch.tensor(np.concatenate(dataset.labels, 0)[:, 0]).long(), minlength=nc) + 1
            # ncf = math.log(0.6 / (m.nc - 0.999999)) if cf is None else torch.log(cf / cf.sum())  # nominal class frequency
            # for a, b, s in zip(m.cv2, m.cv3, m.stride):  # from
            m.cv2.bias.data[:] = 1.0  # box
            m.cv3.bias.data[: m.nc] = math.log(5 / m.nc / (640 / 16) ** 2)  # cls (.01 objects, 80 classes, 640 img)

        def decode_bboxes(self, bboxes):
            """Decode bounding boxes."""
            return dist2bbox(self.dfl(bboxes), self.anchors.unsqueeze(0), xywh=True, dim=1) * self.strides


    class Segment_LSCD(Detect_LSCD):
        """YOLOv8 Segment head for segmentation models."""

        def __init__(self, nc=80, nm=32, npr=256, hidc=256, ch=()):
            """Initialize the YOLO model attributes such as the number of masks, prototypes, and the convolution layers."""
            super().__init__(nc, hidc, ch)
            self.nm = nm  # number of masks
            self.npr = npr  # number of protos
            self.proto = Proto(ch[0], self.npr, self.nm)  # protos
            self.detect = Detect_LSCD.forward

            c4 = max(ch[0] // 4, self.nm)
            self.cv4 = nn.ModuleList(nn.Sequential(Conv_GN(x, c4, 1), Conv_GN(c4, c4, 3), nn.Conv2d(c4, self.nm, 1)) for x in ch)

        def forward(self, x):
            """Return model outputs and mask coefficients if training, otherwise return outputs and mask coefficients."""
            p = self.proto(x[0])  # mask protos
            bs = p.shape[0]  # batch size

            mc = torch.cat([self.cv4[i](x[i]).view(bs, self.nm, -1) for i in range(self.nl)], 2)  # mask coefficients
            x = self.detect(self, x)
            if self.training:
                return x, mc, p
            return (torch.cat([x, mc], 1), p) if self.export else (torch.cat([x[0], mc], 1), (x[1], mc, p))


    class Pose_LSCD(Detect_LSCD):
        """YOLOv8 Pose head for keypoints models."""

        def __init__(self, nc=80, kpt_shape=(17, 3), hidc=256, ch=()):
            """Initialize YOLO network with default parameters and Convolutional Layers."""
            super().__init__(nc, hidc, ch)
            self.kpt_shape = kpt_shape  # number of keypoints, number of dims (2 for x,y or 3 for x,y,visible)
            self.nk = kpt_shape[0] * kpt_shape[1]  # number of keypoints total
            self.detect = Detect_LSCD.forward

            c4 = max(ch[0] // 4, self.nk)
            self.cv4 = nn.ModuleList(nn.Sequential(Conv(x, c4, 1), Conv(c4, c4, 3), nn.Conv2d(c4, self.nk, 1)) for x in ch)

        def forward(self, x):
            """Perform forward pass through YOLO model and return predictions."""
            bs = x[0].shape[0]  # batch size
            kpt = torch.cat([self.cv4[i](x[i]).view(bs, self.nk, -1) for i in range(self.nl)], -1)  # (bs, 17*3, h*w)
            x = self.detect(self, x)
            if self.training:
                return x, kpt
            pred_kpt = self.kpts_decode(bs, kpt)
            return torch.cat([x, pred_kpt], 1) if self.export else (torch.cat([x[0], pred_kpt], 1), (x[1], kpt))

        def kpts_decode(self, bs, kpts):
            """Decodes keypoints."""
            ndim = self.kpt_shape[1]
            if self.export:  # required for TFLite export to avoid 'PLACEHOLDER_FOR_GREATER_OP_CODES' bug
                y = kpts.view(bs, *self.kpt_shape, -1)
                a = (y[:, :, :2] * 2.0 + (self.anchors - 0.5)) * self.strides
                if ndim == 3:
                    a = torch.cat((a, y[:, :, 2:3].sigmoid()), 2)
                return a.view(bs, self.nk, -1)
            else:
                y = kpts.clone()
                if ndim == 3:
                    y[:, 2::3] = y[:, 2::3].sigmoid()  # sigmoid (WARNING: inplace .sigmoid_() Apple MPS bug)
                y[:, 0::ndim] = (y[:, 0::ndim] * 2.0 + (self.anchors[0] - 0.5)) * self.strides
                y[:, 1::ndim] = (y[:, 1::ndim] * 2.0 + (self.anchors[1] - 0.5)) * self.strides
                return y


    class OBB_LSCD(Detect_LSCD):
        """YOLOv8 OBB detection head for detection with rotation models."""

        def __init__(self, nc=80, ne=1, hidc=256, ch=()):
            """Initialize OBB with number of classes `nc` and layer channels `ch`."""
            super().__init__(nc, hidc, ch)
            self.ne = ne  # number of extra parameters
            self.detect = Detect_LSCD.forward

            c4 = max(ch[0] // 4, self.ne)
            self.cv4 = nn.ModuleList(nn.Sequential(Conv_GN(x, c4, 1), Conv_GN(c4, c4, 3), nn.Conv2d(c4, self.ne, 1)) for x in ch)

        def forward(self, x):
            """Concatenates and returns predicted bounding boxes and class probabilities."""
            bs = x[0].shape[0]  # batch size
            angle = torch.cat([self.cv4[i](x[i]).view(bs, self.ne, -1) for i in range(self.nl)], 2)  # OBB theta logits
            # NOTE: set `angle` as an attribute so that `decode_bboxes` could use it.
            angle = (angle.sigmoid() - 0.25) * math.pi  # [-pi/4, 3pi/4]
            # angle = angle.sigmoid() * math.pi / 2  # [0, pi/2]
            if not self.training:
                self.angle = angle
            x = self.detect(self, x)
            if self.training:
                return x, angle
            return torch.cat([x, angle], 1) if self.export else (torch.cat([x[0], angle], 1), (x[1], angle))

        def decode_bboxes(self, bboxes):
            """Decode rotated bounding boxes."""
            return dist2rbox(self.dfl(bboxes), self.angle, self.anchors.unsqueeze(0), dim=1) * self.strides

Experiments

Script

    import warnings

    warnings.filterwarnings('ignore')

    from ultralytics import YOLO

    if __name__ == '__main__':
        # Change this to the path of your own model config file
        model = YOLO('/root/ultralytics-main/ultralytics/cfg/models/11/yolov11-Detect_LSCD.yaml')

        # Change `data` to the path of your own dataset config
        model.train(
            data='/root/ultralytics-main/ultralytics/cfg/datasets/coco8.yaml',
            cache=False,
            imgsz=640,
            epochs=10,
            single_cls=False,  # whether to train as single-class detection
            batch=8,
            close_mosaic=10,
            workers=0,
            optimizer='SGD',
            amp=True,
            project='runs/train',
            name='Detect_LSCD',
        )

Results